MiniMax at Harvard XR 2026: Multimodal AI for the Next Generation of Spatial Computing

On April 11th, MiniMax joined over 20 speakers and hundreds of attendees at Harvard University for HXR 2026, the annual conference organized by the Harvard GSD XR Club. This year's theme, "XR+: From Pixel to Voxel," centered on a question the industry has been circling for a while: what happens when extended reality stops being a flat-screen trick and starts behaving like actual space?

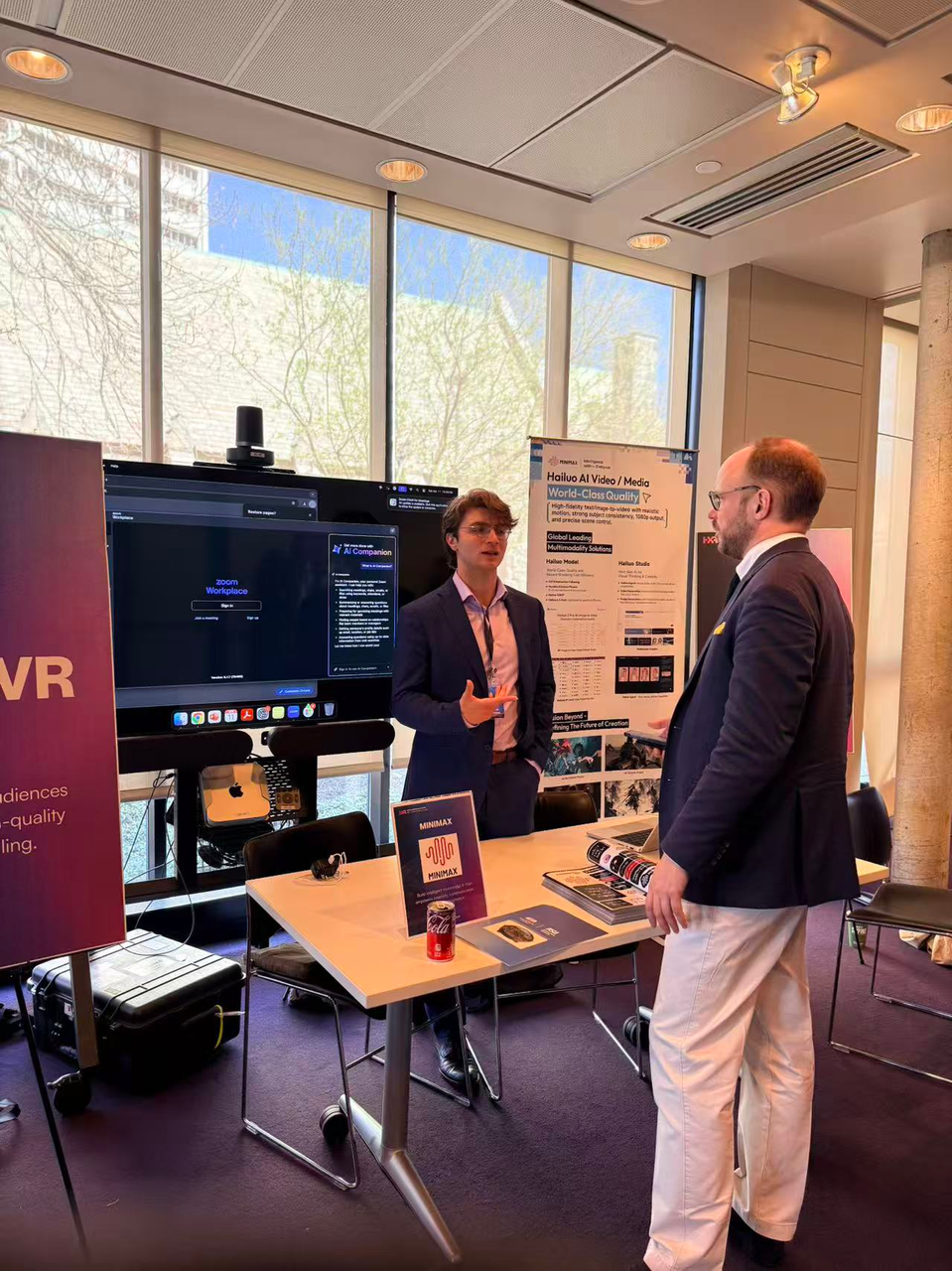

Gibran Mourani, speaking on behalf of MiniMax, presented the company's multimodal AI product suite to an audience of XR builders, researchers, medical professionals, and startup founders who are actively shipping immersive technology.

Multimodal AI Across the XR Stack

The core of the presentation was straightforward: MiniMax builds multimodal AI models across text, speech, video, and music, and XR developers need all of them.

Right now, most XR applications rely on a patchwork of separate tools for generating visuals, handling voice, producing audio environments, and processing natural language. MiniMax's model suite can collapse that stack into a single API platform.

The presentation highlighted several verticals where MiniMax's models can accelerate what XR teams are already building:

• Healthcare: surgical training simulations, therapeutic interventions, clinician education

• Tourism: virtual site visits with generated environments and narrated audio

• Neurological research: AI-assisted visualization of neural data in three-dimensional space

• Interactive training: voice-driven instructional environments for enterprise and education

For a solo developer or a five-person startup, this kind of consolidation means fewer integration headaches, faster prototyping, and the ability to build experiences that used to require a team of twenty.

The gap between what XR promises and what XR delivers has always been a compute and tooling problem. Multimodal AI closes that gap with models that are available and cost-effective today.

Showcase and Pitch Competition

The talks were strong, but the demos stole the day. Twelve teams were selected from hundreds of applications to demo their work at the conference showcase. HXR also hosted its first-ever pitch competition, where participants arrived with functioning prototypes, ready to build.

Gibran Mourani served as one of 14 judges for the competition alongside Amy Peck, Alvin Wang Graylin, Ori Inbar, Lisa Park, Shelley Peterson, and others.

Where Multimodal AI Meets XR

MiniMax showed up at HXR for a specific reason. The XR community is building the interface layer between humans and machines, the literal surfaces and spaces where people will interact with AI. If MiniMax's models are going to power the next generation of immersive applications, the company needs to be in the room with the people building them.

Every one of these applications needs natural language understanding, realistic voice, generated video, or ambient audio, and usually all four at once. MiniMax is an AI company, but the XR industry's hardest problems are exactly the problems multimodal AI was built to solve: making digital environments feel responsive, natural, and human. The next phase of AGI development and the next phase of spatial computing are converging on the same goal: making the boundary between a person and a computer disappear.

To explore MiniMax's multimodal AI models and API platform, visit minimax.io.